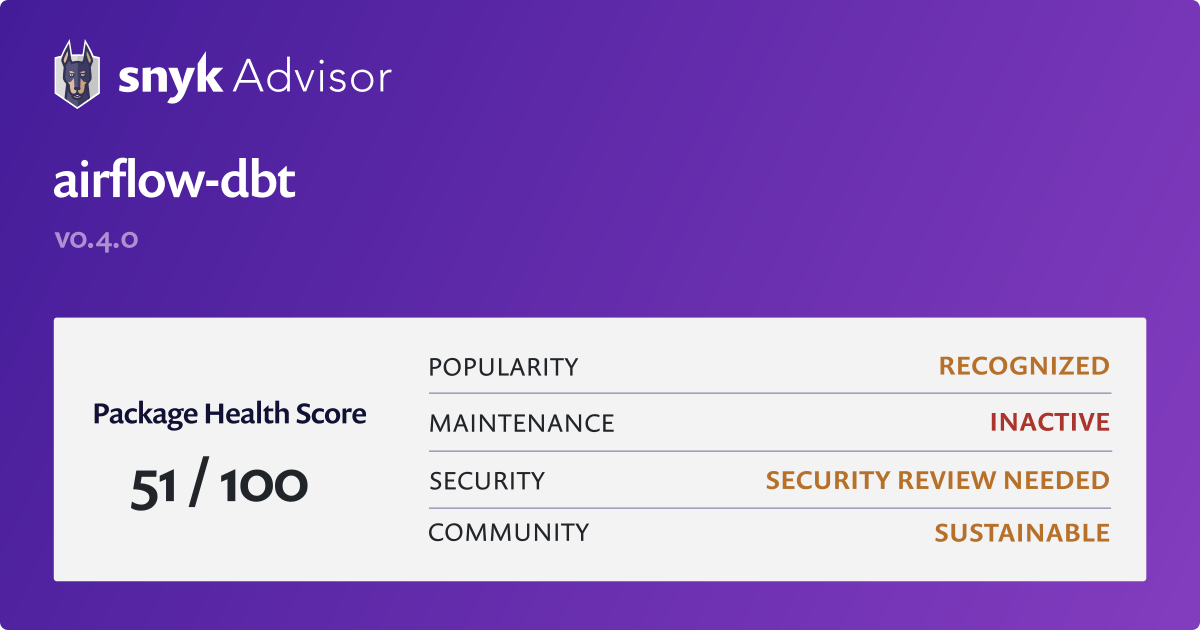

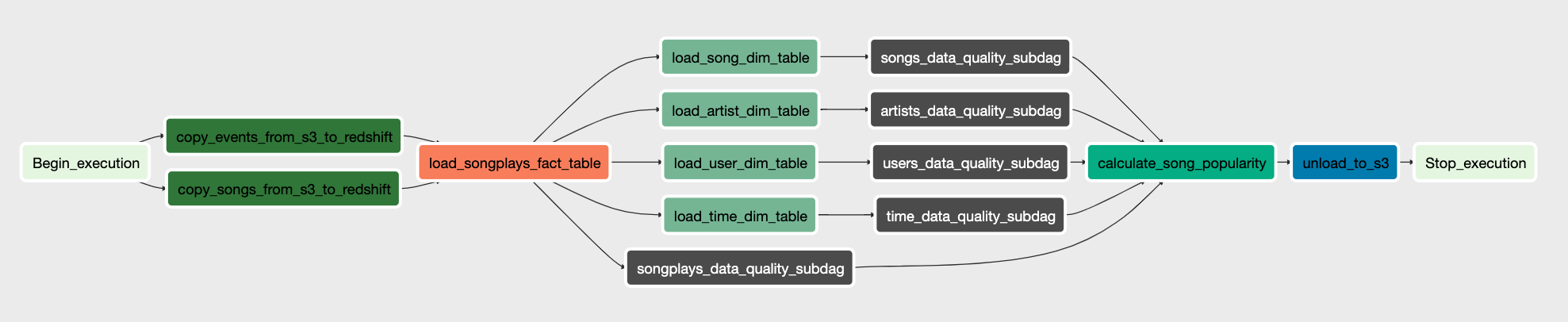

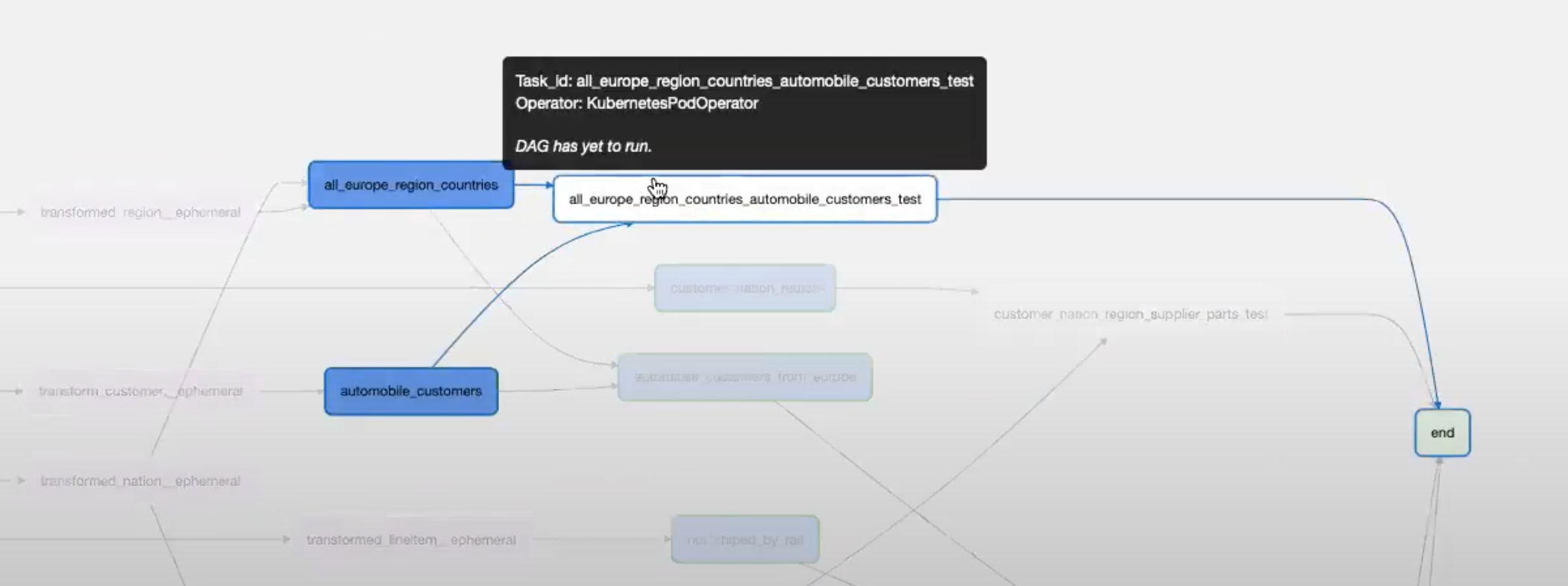

Airflow is one of the great orchestrators today. It is a highly scalable and highly available orchestration framework and, more importantly, it is developed in Python. Therefore, developers can build workflows in Python on top of the various operators Airflow provides. In addition, the Airflow community is quite active, and new operators are constantly added to the ecosystem.

These advantages make Airflow the preferred choice for data engineers working on ETL or ELT. There are many other frameworks for orchestrating workflows, such as Argo Workflows, but for engineers who rely heavily on Python, Airflow is much easier to harness and maintain.

But Airflow is not without drawbacks. One of the biggest problems is that it is a monolith. When workflows are continuously added, this monolith will sooner or later become a big ball of mud. Another problem is that Airflow is a distributed framework, so it is not easy to verify a complete workflow in local development. There are many tools that improve on Airflow's shortcomings, such as Dagster, but the monolith problem remains unresolved.

Although Airflow is widely used across data engineering, roles in the data domain have become more differentiated as the domain has grown in popularity. In addition to the traditional Data Engineer, Data Analyst and ML Engineer, a new role has recently become more common: the Analytics Engineer. Let's briefly describe the responsibilities and capabilities of these roles.

- Data Engineer: responsible for developing and managing the entire data pipeline lifecycle, i.e., they are the main developers of ETL and ELT. They are mainly proficient in distributed architectures, programming (mainly Python), and data storage and its declarative language (mainly SQL).
- Data Analyst: an end user of data who uses already structured data for analysis in various business situations. Very good at SQL and at various data visualization tools.
- ML Engineer: this role is interesting and is positioned differently in each organization. In general it involves work related to machine learning, such as data labeling, data cleansing and data normalization, so there are opportunities to develop ETL/ELT. The main responsibility is still the machine learning framework and models, and the work is mainly done in Python.

So, what is the position of an Analytics Engineer? They are also responsible for managing the lifecycle of the data pipeline, but the biggest difference between them and data engineers is that they are not expected to know distributed architecture or to write programs. Well, how do you develop a data pipeline without programming? Thanks to the various tools in the data ecosystem, especially dbt, even if you don't know programming, you can still implement data pipelines as long as you are familiar with SQL. The emergence of such a role brings a new perspective to the data world.

Traditionally, in an Airflow-based data pipeline, although most of the business logic is made up of SQL, there is a lot of code that must surround the SQL in order to make it work:

- database connections, including credentials, connection pools, etc.
- implementation of SQL calls through PythonOperator or the corresponding database operator.
- defining the upstream and downstream dependencies of the DAG.

In Airflow, all of these must be developed in Python, which is undoubtedly a big challenge for those who do not know how to program.

It's time to decompose Airflow, but before we talk about decomposition, let's talk about why we still need Airflow instead of replacing it.

Airflow is a competent orchestrator, i.e., a workflow manager.
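To make the point about code surrounding the SQL concrete, here is a minimal sketch in plain Python. It deliberately does not use Airflow's API: an in-memory SQLite database stands in for the warehouse, a small wrapper function stands in for an operator, and the standard library's `graphlib` stands in for DAG dependencies. The table names, step names and helper functions are all hypothetical and only serve the illustration.

```python
import sqlite3
from graphlib import TopologicalSorter

# The actual business logic: three SQL statements (hypothetical tables).
SQL_STEPS = {
    "create_orders": "CREATE TABLE orders (id INTEGER, amount REAL)",
    "load_orders": "INSERT INTO orders VALUES (1, 10.0), (2, 32.5)",
    "daily_total": (
        "CREATE TABLE daily_total AS "
        "SELECT SUM(amount) AS total FROM orders"
    ),
}

# Surrounding code, part 3: upstream/downstream dependencies.
# Each step maps to the set of steps that must finish before it.
DEPENDENCIES = {
    "create_orders": set(),
    "load_orders": {"create_orders"},
    "daily_total": {"load_orders"},
}


def run_sql(conn, name):
    """Surrounding code, part 2: a Python wrapper that issues one SQL call."""
    conn.execute(SQL_STEPS[name])
    conn.commit()


def run_pipeline():
    # Surrounding code, part 1: connection handling. A real pipeline would
    # also deal with credentials and connection pools here.
    conn = sqlite3.connect(":memory:")
    try:
        # Resolve a valid execution order from the dependency graph.
        order = list(TopologicalSorter(DEPENDENCIES).static_order())
        for name in order:
            run_sql(conn, name)
        total = conn.execute("SELECT total FROM daily_total").fetchone()[0]
        return order, total
    finally:
        conn.close()


if __name__ == "__main__":
    print(run_pipeline())
```

Only the three strings in `SQL_STEPS` are business logic. Everything else is the kind of scaffolding that someone who only knows SQL would otherwise have to write in Python.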
